397 Billion Parameters. Running on a Laptop. Yes, Just a Laptop.

Stop for a second.

Because what you’re about to read might change how you think about the future of artificial intelligence.

While big tech companies spend millions every year running massive AI models inside warehouse-sized data centers, one person just ran one of the world’s largest models on a regular MacBook Pro you can buy off the shelf at an Apple Store.

- No cloud.

- No GPU cluster.

- No PyTorch.

- Not even Python.

Just C and Metal.

The project that broke all the rules: flash-moe

This is an open-source inference engine capable of running Qwen3.5-397B directly on a MacBook Pro with 48GB of RAM.

The results?

- 4.4 tokens per second

- Full tool calling support

- Usable speed on real tasks, not just a demo

- Completely offline operation
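To put 4.4 tokens per second in perspective, here is a tiny back-of-the-envelope helper. It is only arithmetic on the number quoted above, not code from flash-moe. The function names are made up for illustration, and it assumes a steady decode rate while ignoring prompt-processing time.

```c
#include <assert.h>

/* Seconds needed to generate n_tokens at a steady decode rate.
   Assumption: the rate is constant and prompt processing is ignored. */
double generation_seconds(double n_tokens, double tokens_per_second) {
    assert(tokens_per_second > 0.0);
    return n_tokens / tokens_per_second;
}

/* Tokens produced in one hour of uninterrupted decoding. */
double tokens_per_hour(double tokens_per_second) {
    assert(tokens_per_second >= 0.0);
    return tokens_per_second * 3600.0;
}
```

At 4.4 tokens per second, `tokens_per_hour(4.4)` works out to 15,840 tokens, and `generation_seconds(500, 4.4)` says a 500-token reply takes a little under two minutes.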

Why does this sound impossible?

The numbers alone should make any engineer do a double-take:

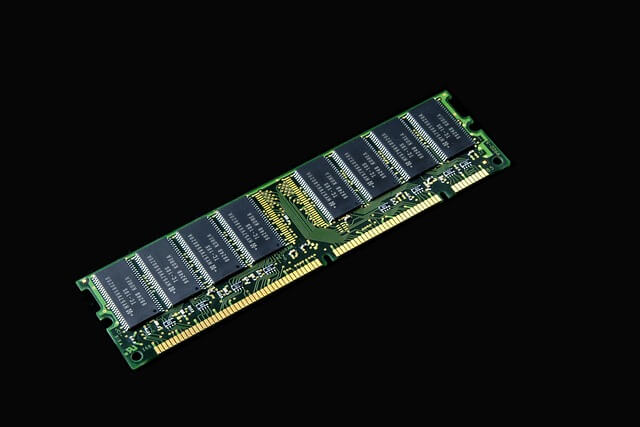

- Model size: 209GB

- Device RAM: 48GB

- Actual memory used during inference: 5.5GB
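A quick sanity check on those figures, as a minimal sketch. It assumes "GB" means decimal gigabytes and that the whole 209GB file is model weights; neither is confirmed by the project. The function names are illustrative only.

```c
#include <assert.h>

/* Average storage per parameter, in bits, for a weight file of the given size.
   Assumption: the entire file consists of model weights. */
double bits_per_parameter(double file_bytes, double n_parameters) {
    assert(n_parameters > 0.0);
    return file_bytes * 8.0 / n_parameters;
}

/* How many times larger the model file is than the available RAM. */
double size_ratio(double model_gb, double ram_gb) {
    assert(ram_gb > 0.0);
    return model_gb / ram_gb;
}
```

`bits_per_parameter(209e9, 397e9)` comes out at about 4.2 bits per parameter, which hints at roughly 4-bit quantized weights (an inference from the numbers, not something stated here). Even compressed that far, `size_ratio(209.0, 48.0)` shows the file is still more than four times larger than the laptop's RAM.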

How is that even possible?

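The secret lies in the MoE (Mixture of Experts) architecture. An MoE model keeps most of its weights in many separate "expert" sub-networks, and a small router picks only a few of them for each token.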

Instead of loading all 512 experts at once, the system:

- Loads only 4 experts per token

- Streams the expert weights it needs directly from the SSD, on demand

- Runs hand-optimized compute kernels written as Metal shaders

The result? A model that would normally require a data center… running on a personal laptop.
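The selection-and-streaming idea can be sketched in a few dozen lines of C. This is not taken from the flash-moe source. It assumes a hypothetical flat weight file where each layer stores its experts back to back at a fixed size, and the names `select_top_k`, `expert_offset`, and `load_expert` are invented for illustration. A real router would also normalize the scores and weight each expert's output.

```c
#define _XOPEN_SOURCE 700  /* expose pread() under strict C standards */
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <sys/types.h>
#include <unistd.h>

#define N_EXPERTS 512  /* expert count quoted in the article */
#define TOP_K     4    /* experts loaded per token, per the article */

/* Pick the k highest-scoring experts from the router scores.
   Writes expert indices into `chosen`, highest score first.
   Ties go to the lower index. */
void select_top_k(const float *scores, int n, int k, int *chosen) {
    assert(k > 0 && k <= n);
    for (int slot = 0; slot < k; slot++) {
        int best = -1;
        for (int e = 0; e < n; e++) {
            int taken = 0;
            for (int j = 0; j < slot; j++) {
                if (chosen[j] == e) { taken = 1; break; }
            }
            if (taken) continue;  /* already selected in an earlier slot */
            if (best < 0 || scores[e] > scores[best]) best = e;
        }
        chosen[slot] = best;
    }
}

/* Byte offset of one expert's weights.
   Assumption (hypothetical layout): experts of a layer are stored
   contiguously, each occupying exactly expert_bytes. */
uint64_t expert_offset(uint64_t layer_base, uint64_t expert_bytes, int expert) {
    assert(expert >= 0);
    return layer_base + expert_bytes * (uint64_t)expert;
}

/* Read one expert's weights from disk into buf.
   Returns 0 on success, -1 on a read error or unexpected end of file. */
int load_expert(int fd, void *buf, size_t expert_bytes, uint64_t offset) {
    size_t done = 0;
    while (done < expert_bytes) {
        ssize_t n = pread(fd, (char *)buf + done, expert_bytes - done,
                          (off_t)(offset + done));
        if (n <= 0) return -1;
        done += (size_t)n;
    }
    return 0;
}
```

In use, a caller would run `select_top_k(scores, N_EXPERTS, TOP_K, chosen)` on the router output, then call `load_expert` once per chosen index with the offset from `expert_offset`. Only those few experts ever touch RAM, which is why the working set can stay far below the size of the file.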

Here’s the wildest part.

This project wasn’t built by Google.

Not by OpenAI.

Not by NVIDIA.

It was built by one person.

A side project, in fact, by the VP of AI at CVS Health.

He used Claude Code as a coding partner and shipped:

- Roughly 7,000 lines of C

- Around 1,200 lines of Metal shaders

- 58 manual optimization passes

- All in about 24 hours

Why this actually matters

Running a 397B-parameter model on cloud GPUs can cost:

- Hundreds of dollars per hour

- Millions per year for enterprise use

Now?

- A $3,499 laptop

- 100% private, local execution

- No API keys

- No subscriptions

- Unlimited use, forever

The bigger picture

We’re not just looking at another cool GitHub repo.

We’re watching the beginning of a real shift:

- AI power moving from corporations to individuals

- The end of infrastructure monopolies

- The rise of personal AI supercomputers

The question is no longer “Do I need the cloud to run serious AI?”

It’s “How long until every laptop is its own AI data center?”

Where to find it

The project is already trending on GitHub.

It’s topping Hacker News.

And it’s fully open source.

If you care about privacy, local-first tools, or just love seeing what’s possible when smart engineering meets consumer hardware, this one’s worth your time.

No hype. No gatekeeping. Just code that works.

Written by Hazem Abbas. I write about AI, privacy, Linux, and tools that put control back in your hands. If this resonated, share it with someone who still thinks big AI requires big infrastructure.