The “Golden Age” of AI felt like magic. I remember sitting in a coffee shop in Ankara with a group of friends, a mix of fellow developers and medical colleagues, and the air was thick with awe. One friend, a radiologist, showed me how a simple prompt could summarize a 50-page clinical trial in seconds. Another, a frontend dev, was giddy because he’d just “written” a whole React component without touching a semicolon.

But last week, that same group was singing a different tune. “It’s getting dumber,” the developer grumbled, staring at a block of hallucinated code. “It’s like it lost its spark,” the radiologist added. “It used to give me these creative, sweeping insights. Now? It feels… pedantic. It feels like it’s following a checklist rather than thinking.”

They aren’t alone. If you spend enough time on social media or in dev forums, the consensus seems to be that AI is “degrading.” But as someone who looks at the world through both a stethoscope and a terminal, I’ve realized the truth is much more nuanced.

AI isn’t getting “stupid.” It’s getting specific. And if you don’t adjust your stride, you’re going to get left behind.

From “General Magic” to “Mechanical Precision”

When Large Language Models (LLMs) first hit the mainstream, they were trained to be generalists. They were “eager to please,” often hallucinating creative answers just to satisfy the user’s intent. It was the “GenAI Honeymoon.”

However, as we move into 2026, the priorities have shifted. We’ve moved from raw, unbridled generation toward training methods such as RLHF (Reinforcement Learning from Human Feedback) that prioritize safety, factuality, and instruction-following.

In the medical world, we call this a “narrowing of the differential.” At first, a medical student knows a little bit about every disease; they are creative and broad. But as they become specialists, they become highly focused. They stop guessing; they start verifying. AI is undergoing the same professionalization. It’s no longer a “chatbot”; it’s becoming a “reasoning engine.”

Why It Feels “Stupider” (The Complexity Trap)

There are three reasons, two technical and one psychological, why the AI feels like it’s losing its edge:

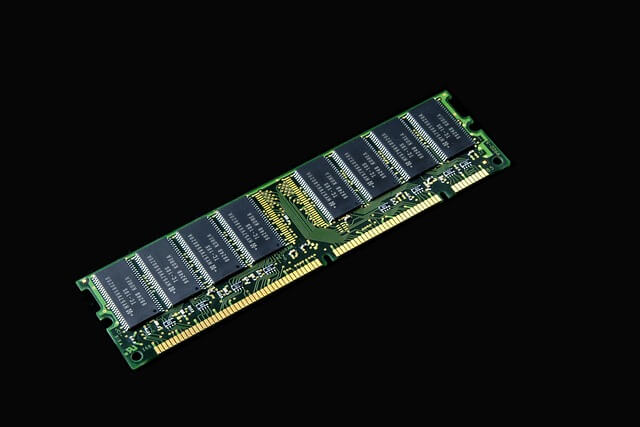

1- Quantization and Optimization:

To make these models faster and cheaper to run, developers use techniques like quantization (reducing the numerical precision of the model’s weights, for example from 16-bit floats to 8-bit or 4-bit integers). This can make the AI noticeably faster and cheaper to serve, but it can also shave off the “creative” fringes of its “thought” process.
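To make the precision trade-off concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. The weight values are made up for illustration, and real inference stacks use more elaborate schemes (per-channel scales, 4-bit formats, calibration), so treat this as a toy model of the idea rather than how any particular lab does it.

```python
def quantize_int8(weights):
    """Map floats to signed 8-bit integers using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero list; the scale value is then irrelevant.
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only to show the rounding effect.
weights = [0.8123, -0.4071, 0.0032, 0.2999, -1.2700]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
errors = [abs(w - r) for w, r in zip(weights, restored)]

for w, q, r in zip(weights, quantized, restored):
    print(f"{w:+.4f} -> {q:+4d} -> {r:+.4f}")
```

Notice that the smallest weight (0.0032) rounds to the integer 0 and comes back as exactly zero. Large weights survive almost intact; the subtle ones are the first to go, which is one way to picture the lost “fringes.”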

2- Safety Guardrails:

Every time an AI is “aligned” to prevent it from giving dangerous medical advice or generating biased code, a bit of its flexibility is sacrificed. It’s like a doctor who is so afraid of a lawsuit that they only give the most conservative, boring advice possible.

3- The End of the “First-Time” High:

Our expectations have skyrocketed. In 2023, a poem about a toaster was amazing. In 2026, we expect the AI to architect a multi-tenant SaaS with perfect TypeScript definitions. When it misses a single line, we call it “stupid.”

How to Benefit: The “Specialist” Mindset

If the AI is becoming more specific, your prompting must become more structural. You can no longer just “chat” with the AI; you have to “engineer” with it. Here is how to keep up:

1. Shift from “Ask” to “Architect”

In my development work, I no longer ask the AI to “Write a function.” I provide it with context that includes my existing database schema, my coding standards (e.g., “Use Laravel 11 syntax”), and the specific edge cases it needs to handle.
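One way to make that habit repeatable is to assemble the prompt from named parts instead of typing it fresh each time. The sketch below is only an illustration: `build_prompt`, the section titles, and the example schema and edge cases are all hypothetical, not a standard format any model requires.

```python
def build_prompt(task, schema, standards, edge_cases):
    """Assemble a structured prompt from the pieces a model needs to see."""
    sections = [
        ("Task", task),
        ("Database schema", schema),
        ("Coding standards", "\n".join(f"- {s}" for s in standards)),
        ("Edge cases to handle", "\n".join(f"- {c}" for c in edge_cases)),
    ]
    return "\n\n".join(f"## {title}\n{body}" for title, body in sections)


# All values below are made-up examples.
prompt = build_prompt(
    task="Write a function that returns a patient's upcoming appointments.",
    schema="appointments(id, patient_id, starts_at, cancelled_at)",
    standards=["Use Laravel 11 syntax", "Return typed collections"],
    edge_cases=["Patient has no appointments", "Appointment was cancelled"],
)
print(prompt)
```

The point of the structure is less the exact headings and more that nothing gets forgotten: if a section is empty, you notice before the model does.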

2. The Multi-Model Strategy

Don’t rely on one “brain.” For my medical writing, I use one model for brainstorming (the creative generalist), a second for fact-checking (the specific reasoner), and a third (like a specialized medical LLM) for clinical accuracy.
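As a rough sketch, that three-model workflow is just a pipeline where each stage receives the previous stage's output. The model functions here are stand-in stubs I invented so the example runs offline; in practice each would wrap a call to whichever provider or local model you actually use.

```python
from typing import Callable, Dict

# A "model" here is any function that takes a prompt and returns text.
Model = Callable[[str], str]


def run_pipeline(topic: str, brainstormer: Model, fact_checker: Model,
                 clinical_reviewer: Model) -> Dict[str, str]:
    """Pass a topic through three specialised models, in order."""
    draft = brainstormer(f"Brainstorm an outline on: {topic}")
    checked = fact_checker(f"Flag unsupported claims in:\n{draft}")
    final = clinical_reviewer(f"Review for clinical accuracy:\n{checked}")
    return {"draft": draft, "checked": checked, "final": final}


def make_stub(label: str) -> Model:
    """Hypothetical stand-in for a real model call; it only tags the prompt."""
    return lambda prompt: f"[{label}] {prompt}"


result = run_pipeline(
    "patient safety checklists",
    brainstormer=make_stub("brainstorm"),
    fact_checker=make_stub("fact-check"),
    clinical_reviewer=make_stub("clinical"),
)
print(result["final"])
```

Keeping every intermediate result in the returned dictionary is deliberate: when the final text looks wrong, you can see which “brain” introduced the problem.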

3. Use “Chain of Thought” Explicitly

Since AI is now more focused on logic, you need to trigger that logic. Instead of asking for a result, tell it: “Think step-by-step. Analyze the contraindications of these two drugs, then evaluate the patient’s kidney function, and finally suggest a dosage.” This forces the AI to use its “new” specificity to your advantage.
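If you find yourself writing step-by-step instructions often, a small helper can keep the ordering explicit. This is a minimal sketch; `chain_of_thought_prompt` and the sample question are hypothetical, and the wording of the instruction line is just one phrasing that follows the example above.

```python
def chain_of_thought_prompt(question, steps):
    """Turn a question and an ordered list of steps into one explicit prompt."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return (
        f"{question}\n\n"
        "Think step-by-step. Work through these steps in order "
        "and show your reasoning for each:\n"
        f"{numbered}"
    )


cot_prompt = chain_of_thought_prompt(
    "What dosage is appropriate for this patient?",  # made-up question
    [
        "Analyze the contraindications of these two drugs",
        "Evaluate the patient's kidney function",
        "Suggest a dosage",
    ],
)
print(cot_prompt)
```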

Progressing to Keep Up: The Human-AI Symbiosis

As a “Nomad of words and code,” I’ve learned that the “stupidity” we perceive is actually a reflection of our own stagnant skills. If the tool becomes more precise, the craftsman must become more skilled.

We have written dozens of resources on patient safety and quality satisfaction, and the recurring theme is always the same: Precision saves lives. In medicine, “specific” is a compliment. It means we aren’t guessing.

The future of AI is not about a “God-box” that answers everything perfectly with one click. It’s about a suite of highly specific “Agents.” One agent for your billing, one for your clinical notes, and one for your codebase.

The Action Plan for 2026

- Audit Your Prompts: If you are using the same prompts you used two years ago, you are the bottleneck. Start using System Instructions and specialized “Personal Context” tools.

- Embrace the “Boring” Accuracy: Be glad the AI is no longer “guessing” your medical dosages or your database queries. Precision is the foundation of scale.

- Keep the “Human” in the Loop: AI is the engine, but you are the steering wheel. As it gets more specific, your job is to provide the “Broad Vision” that only a human, a doctor, a developer, a father, a writer, can provide.

AI isn’t getting dumber. It’s growing up. It’s time we did the same.

FAQs

1. Why does the AI sometimes repeat itself?

This is often attributed to “over-alignment.” The model is so focused on being “safe” and “correct” that it sticks to a narrow path of proven responses. To fix this, try asking it to “approach the problem from a contrarian perspective.”

2. Is “Model Collapse” real?

It is a real effect in research settings: when models are trained repeatedly on AI-generated data, their output quality can degrade. Major labs (Google, OpenAI, Anthropic) are reported to curate and filter synthetic data to limit this. Most of what we perceive as “collapse” is more likely just stricter safety filtering.

3. How can I make AI more creative again?

Increase the “Temperature” setting if you have API access, or explicitly tell the AI: “I want a high-variance, creative response. Ignore standard formatting and explore experimental ideas.”
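If you are curious what temperature actually does, the sketch below shows the usual mechanism: the model's raw scores (logits) are divided by the temperature before being turned into probabilities. The three logits are invented for illustration, and real APIs apply this inside the sampler, so you only ever set the number.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next words.
logits = [2.0, 1.0, 0.5]

cold = softmax_with_temperature(logits, 0.5)  # low temperature: safer picks
warm = softmax_with_temperature(logits, 1.5)  # high temperature: more variety

print("temperature 0.5:", [round(p, 3) for p in cold])
print("temperature 1.5:", [round(p, 3) for p in warm])
```

At the lower temperature the top candidate takes most of the probability; at the higher one the less likely words get a real chance of being picked, which is where the “creative” feel comes from.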

4. Does this mean I need to learn to code to use AI?

Not necessarily, but you need to learn Logic. Understanding how a system works (the if/then/else of the world) is becoming more important than knowing a specific programming language.

5. Why is AI better at specific tasks like medical coding or law now?

Because we are moving toward Fine-Tuning. Models are being “schooled” on specific textbooks and legal cases, making them “narrow experts” rather than “wide guessers.”